DeepFake Detection: Radiology Edition

Easier said than done.

Generative AI image models have become sophisticated enough to blur the line between “real” and “fake” at first glance. Social media is replete with images (and videos) that misrepresent reality. Fortunately, many fail under closer inspection because fine details are distorted or nonsensical.

Within narrow use cases, however, distinguishing between “real” and “fake” becomes much more challenging, and plain radiographs, it would seem, are one of those instances.

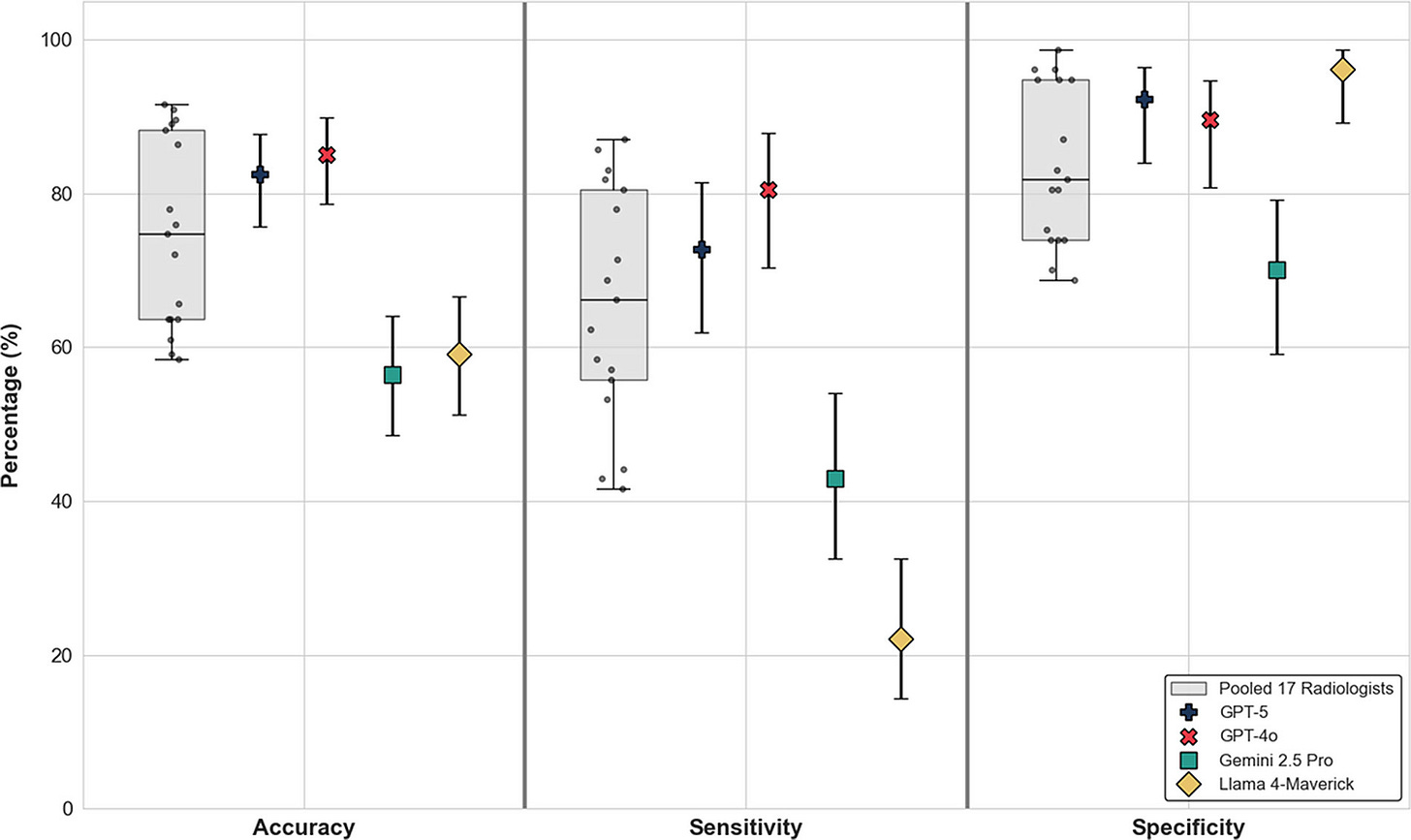

This study used GPT-4o and RoentGen to generate synthetic anatomic and chest radiographs, respectively, and then mixed them with matched authentic radiographs. Seventeen radiologists classified each radiograph as real or fake, and the same images were also run through four LLMs for comparison.

So far, the deepfakes aren’t winning, but enough are slipping through to give pause:

When a radiograph was obviously synthetic, radiologists and LLMs alike usually identified it correctly. However, a sizable share of the synthetic images (60 to 80%) slipped through undetected, and GPT-5 and GPT-4o were the only models whose performance matched or exceeded that of the radiologists.

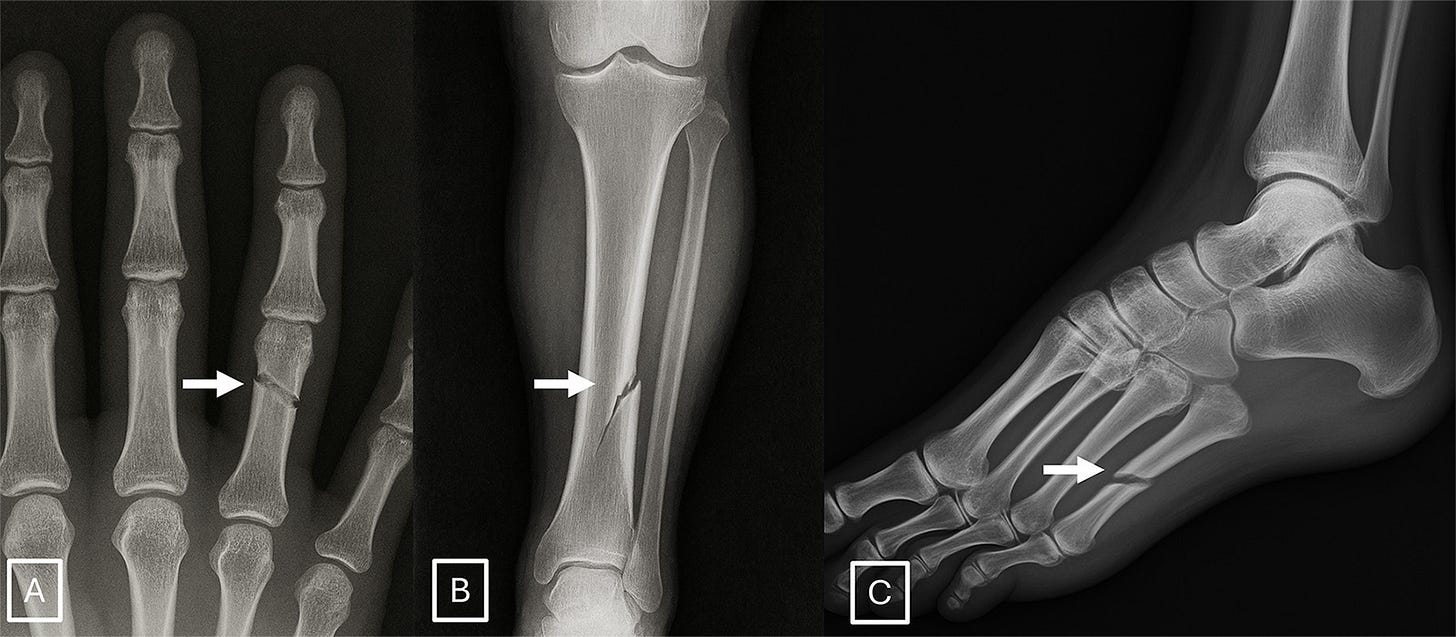

A qualitative survey of the radiologists after the initial phases of the study identified features they found suggestive of synthetic radiographs: absence of noise, an overly smooth cortex, and unnatural-looking fracture patterns. A few examples:

There isn’t a dramatic, earthshaking takeaway from these examples. The editors raise concerns about potentially fraudulent image generation and about contamination of training sets with synthetic data. These are real issues to consider, but nothing that stands radiology on its head.