The "Emergency Medicine Is Dead" AI Demonstration

The AI will never miss a diagnosis!

It’s another round of breathless, clickbait hype:

> From George Clooney in ER to Noah Wyle in The Pitt, emergency department doctors have long been popular heroes. But will it soon be time to hang up the scrubs?
>
> A groundbreaking Harvard study has found that AI systems outperformed human doctors in high-pressure emergency medicine triage, diagnosing more accurately in the potentially life and death moments when people are first rushed to hospital.

And here’s the proof:

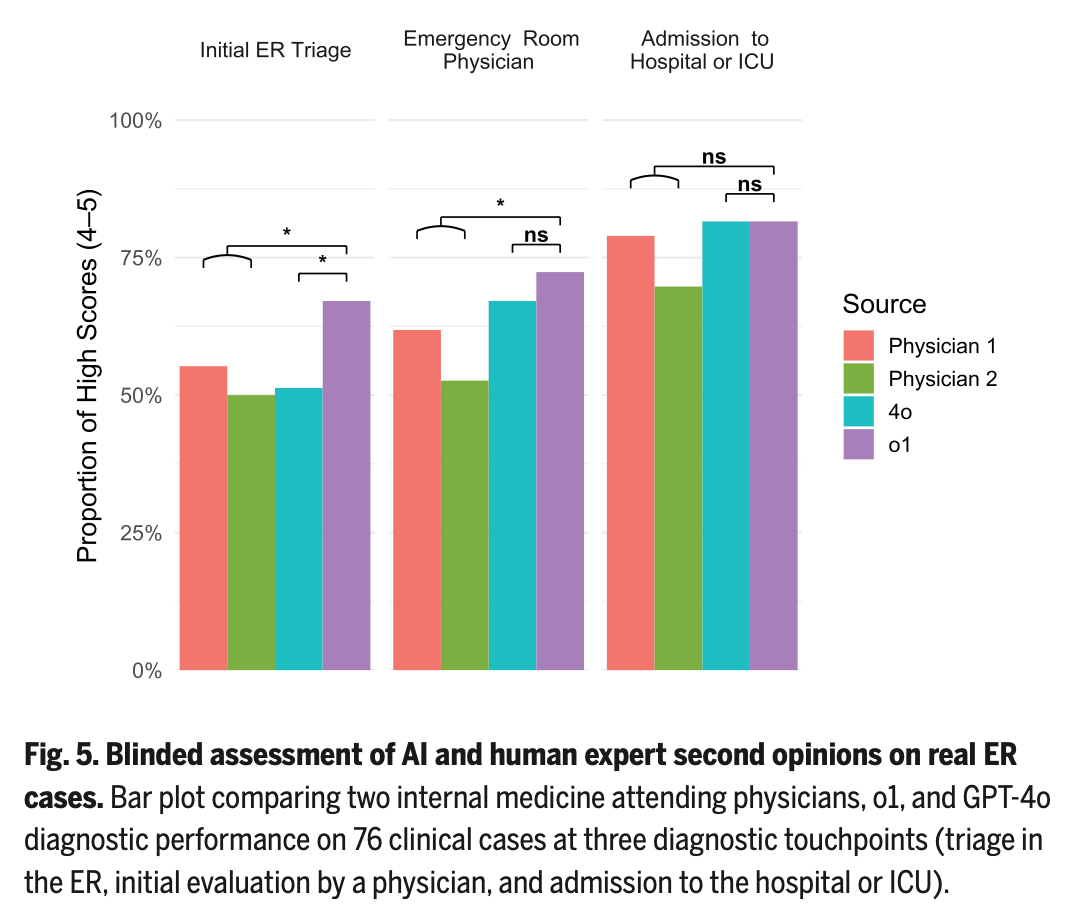

Each study “participant” generated a list of five potential diagnoses at each of three stages of information availability: the clinical content documented at nurse triage, the content available after an ED physician had documented the encounter, and the content available upon admission to the hospital. Additional physician members of the study team then scored each list on whether it contained the exact or a near-exact correct diagnosis (the “Bond Score”), reported as the percentage of lists meeting that bar. Purple bar (o1-preview) wins!
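To make the metric concrete: as described above, it is just the share of differential lists, per information stage, that a rater judged to contain an exact or near-exact diagnosis. Below is a minimal sketch, assuming each rater judgment is collapsed into a hypothetical `(stage, label)` pair with labels `"exact"`, `"near-exact"`, or `"miss"`. The stage names, labels, and `hit_rate` helper are illustrative inventions, not the study's code, and the sample judgments are made up rather than drawn from its data.

```python
from collections import defaultdict

# Hypothetical stage names mirroring the three information stages (illustrative only).
STAGES = ("triage", "ed_physician", "admission")
# Hypothetical rater labels that count as a "hit" for one five-item differential list.
HIT_LABELS = {"exact", "near-exact"}


def hit_rate(judgments):
    """Percentage of lists per stage judged to contain an exact or near-exact diagnosis.

    `judgments` is an iterable of (stage, label) pairs, one per scored list.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for stage, label in judgments:
        totals[stage] += 1
        if label in HIT_LABELS:
            hits[stage] += 1
    # Report stages in a fixed order and skip any stage with no scored lists.
    return {s: 100.0 * hits[s] / totals[s] for s in STAGES if totals[s]}


# Made-up judgments for illustration; these are NOT results from the study.
SAMPLE = [
    ("triage", "exact"),
    ("triage", "miss"),
    ("ed_physician", "near-exact"),
    ("ed_physician", "exact"),
    ("admission", "miss"),
    ("admission", "exact"),
]

if __name__ == "__main__":
    print(hit_rate(SAMPLE))  # {'triage': 50.0, 'ed_physician': 100.0, 'admission': 50.0}
```

Note that a binary hit-or-miss tally like this only asks whether the final diagnosis appears somewhere on the list; it says nothing about what else the list contains.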

Of course: 1) these results reflect what internal medicine clinicians made of emergency department clinical data; 2) the “exact diagnosis” is not the intent of ED information gathering, which is the exclusion of immediately sinister conditions and early treatment to prevent ongoing morbidity and mortality; 3) the entire foundation of this demonstration is information produced by the expert evaluation, management, and cognitive work of human emergency physicians; and 4) sure, neat trick, but it’s another contrived experiment in silico, not in Real Life.

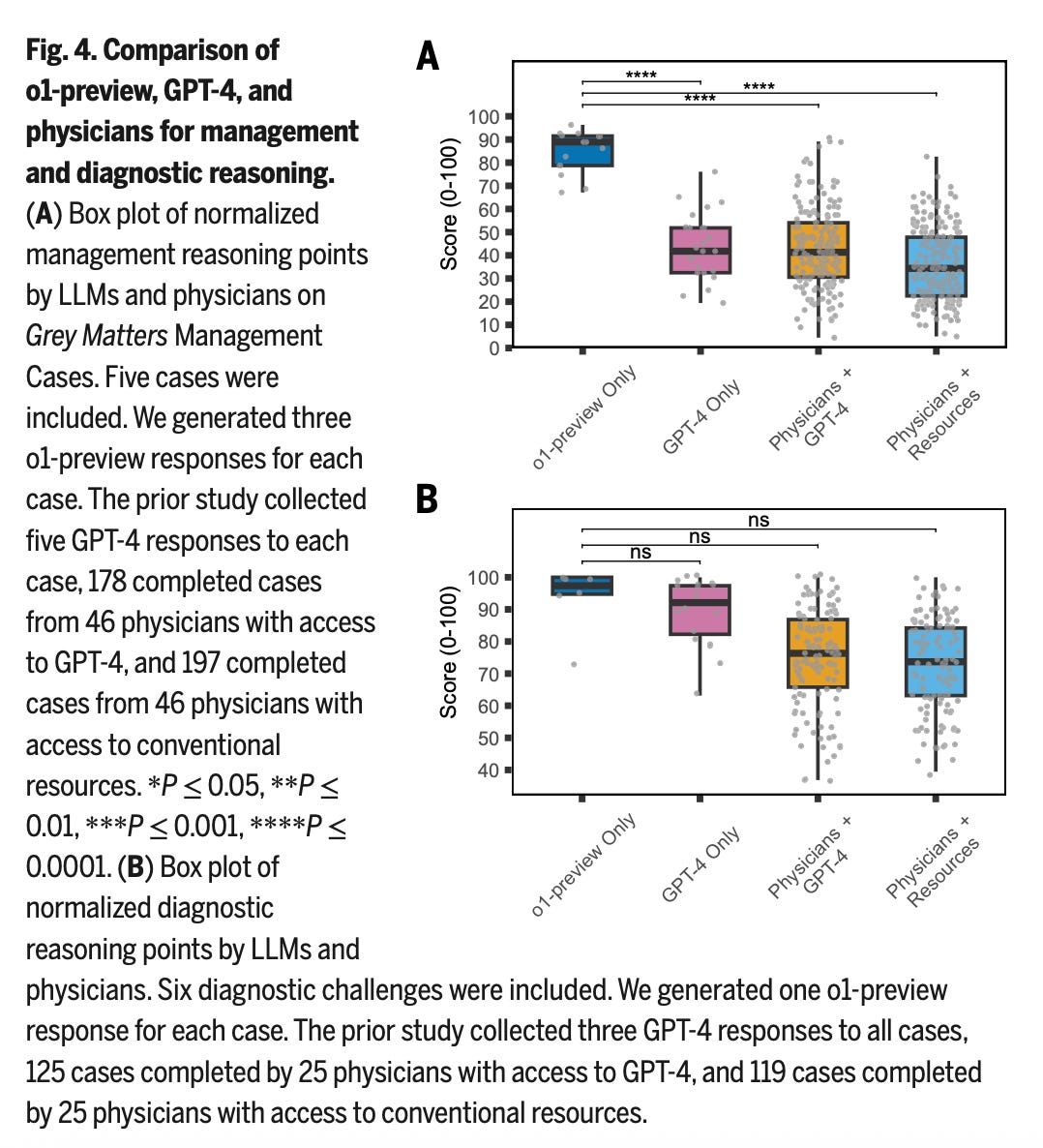

With predictable findings, they’ve also fed o1-preview NEJM Healer cases, Grey Matters cases, and Clinicopathological Conferences and compared them with previous 4o results. Another generation of models, another incremental improvement in performance:

It also ought to be noted – o1 is last year’s OpenAI flagship, whereas we’re well beyond that today. OpenAI o1 is also just a “general” LLM, rather than one specifically tuned or trained for optimal performance on clinical content.

As for the calls for sunsetting clinical autonomy, we’re not quite there yet, but the transformation is well under way. Even if there are ways to nitpick these results, the frog is already grasping at our heels.