More On AI Scribe Note Quality

Wait ... are AI scribes bad now?

In the same vein as some recent reality-check results for AI ambient scribes, this is another article showing that the rose-colored glasses are finally coming off.

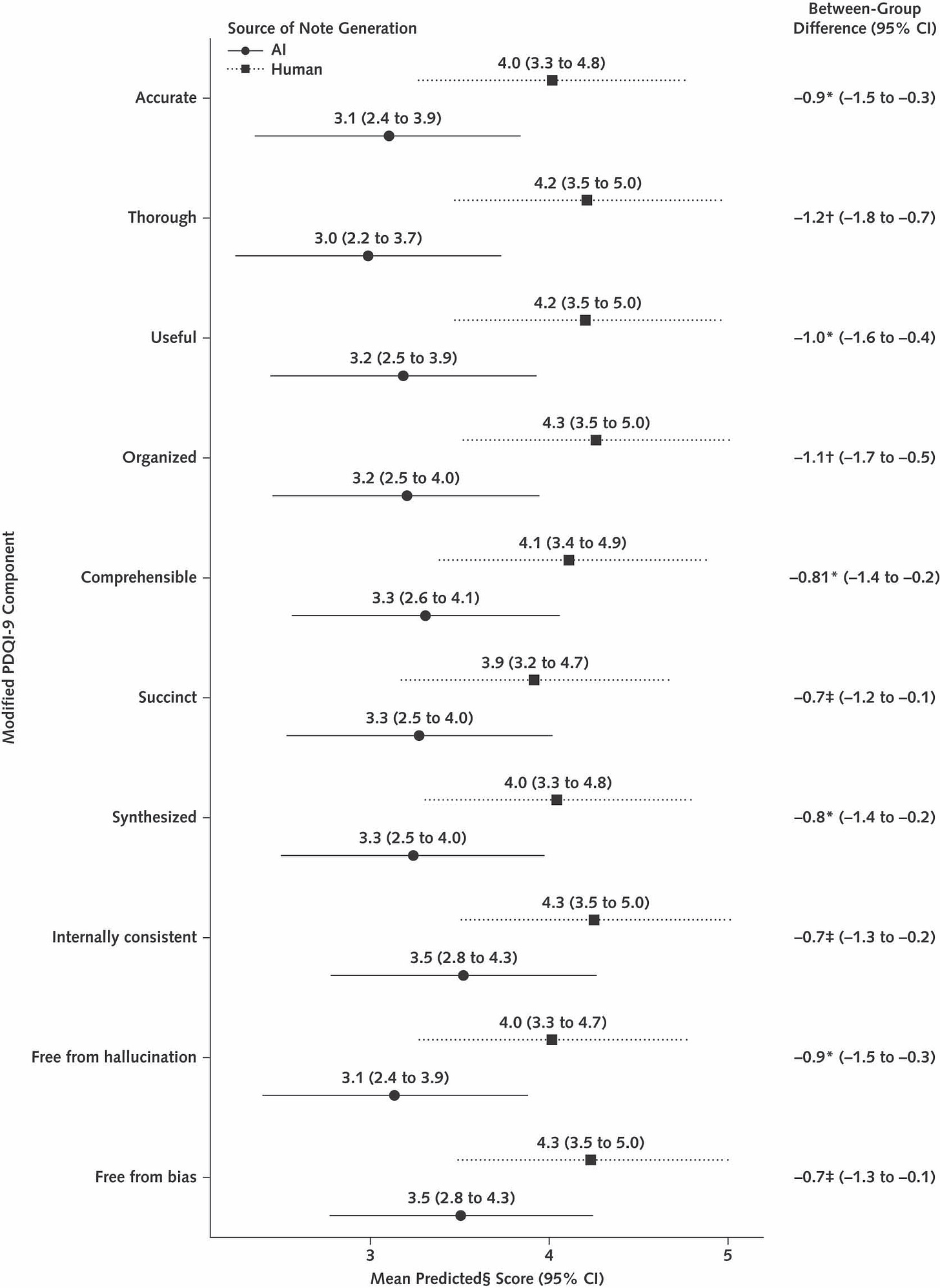

This is a secondhand report from a “national technology sprint” at the Veterans Health Administration in which 11 scribe vendors were put through their paces on five simulated case recordings. The report gives modified PDQI-9 scores for the pooled cohort of AI scribe notes, compared with scores for a set of human clinician notes written from the same recordings.

Humans win.

As with every study, there are quirks that reduce internal and external validity. These are recordings of “simulated” patient encounters using standardized patients working from an illness script, and the Hawthorne effect will bias the humans involved toward writing higher-quality notes than usual. There are some other interesting observations, however: one of the encounters had a fair bit of background noise, while in another both the patient and clinician were wearing masks. The ambient scribes performed worst on these two, further demonstrating the need for pristine audio to minimize transcription degradation.

AI ambient scribes are not as magical as the vendors would have you believe, but they’re also not as terrible as some evaluations might show. They’re simply tools requiring experience and adaptation in order to maximize their capability and value, and their incremental benefit is not evenly distributed.